
The Unsaid: We’re Back-Seating the Real AI Revolution

- Written By

Shailja Tarungal

Ever had that one friend who speaks with complete confidence even when they are clearly making things up?

That friend is AI.

Models like GPT, Claude, and Gemini are not intelligent in the human sense. They do not understand context the way we do. They are next-word prediction machines, so they operate on probability rather than on human-style logic or reasoning.

Let me explain how this works in simple terms.

How AI Generates Responses:

Think of this line: “The sun rises in the…”

Your brain fills in “east” almost instantly. You did not calculate it. You just knew. You have read or heard it enough times.

That is roughly how AI models work. They are trained on billions of examples and learn how language flows. Not by understanding meaning, but by predicting patterns.

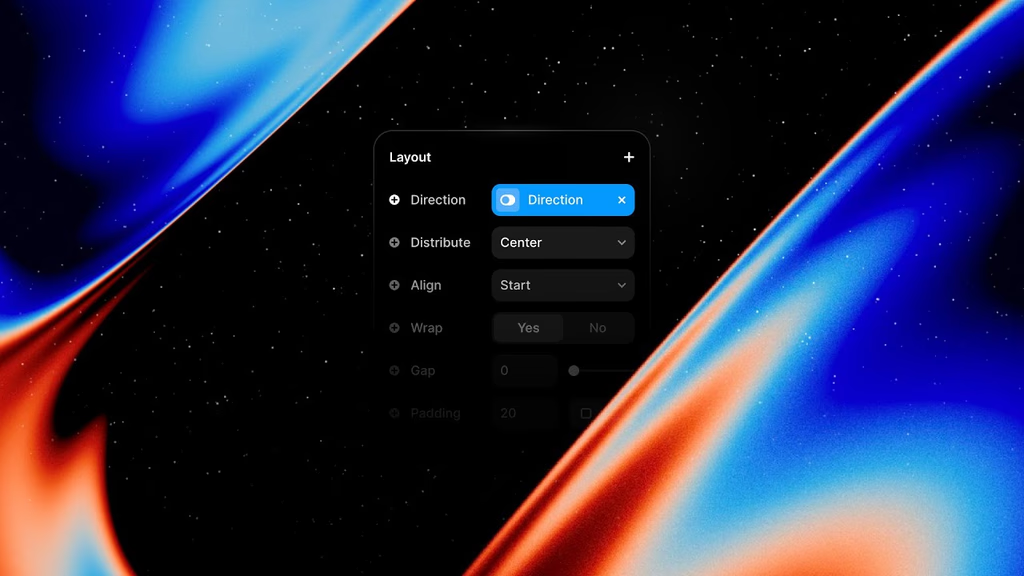

They use deep learning, specifically the transformer architecture, which helps them process sequences of words and estimate what is most likely to come next.
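To make the "sun rises in the…" idea concrete, here is a toy sketch of next-word prediction. It is not a transformer and not how GPT, Claude, or Gemini are actually built: it simply counts which word follows each two-word context in a tiny, made-up corpus. The corpus and the function names `train` and `next_word_probs` are assumptions for illustration only.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, for illustration only.
corpus = [
    "the sun rises in the east",
    "the sun sets in the west",
    "the sun rises in the east every morning",
]

def train(sentences, n=2):
    """Count which word follows each n-word context."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for i in range(len(words) - n):
            context = tuple(words[i:i + n])
            counts[context][words[i + n]] += 1
    return counts

def next_word_probs(counts, text, n=2):
    """Turn the counts for the last n words of `text` into probabilities."""
    context = tuple(text.lower().split()[-n:])
    followers = counts.get(context, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {word: count / total for word, count in followers.items()}

counts = train(corpus)
probs = next_word_probs(counts, "The sun rises in the")
best = max(probs, key=probs.get)
print(best, probs)
```

The toy model picks "east" only because "in the" was followed by "east" more often than by "west" in its data. It has no idea what a sunrise is. Real models do the same kind of thing at a vastly larger scale and with far richer context.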

Given that, here is how the system functions at its core:

Pattern Recognition Using Large-Scale Training: AI is trained on massive datasets made up of books, websites, research papers, and more. It learns how words tend to follow each other in different scenarios. This is the foundation of everything else.

Context Awareness Through Attention Mechanism: Unlike older chatbots, these models use what is called an attention mechanism. This lets them weigh the earlier words in a conversation when predicting the next one, so they can keep track of context across a long exchange. This is why it feels like the AI understands you, even though it does not.

Fine-Tuning Using Human Feedback: After the model is pre-trained, it goes through a stage called Reinforcement Learning from Human Feedback (RLHF). Humans interact with the model and rate its responses. Based on those ratings, the system is adjusted to give more appropriate and human-like outputs.
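For the curious, the attention mechanism mentioned above can be sketched in a few lines. This is a bare-bones version of scaled dot-product attention for a single query, written in plain Python. The two-dimensional vectors are invented for the example. Real models learn these vectors during training and use many attention heads and layers, so treat this as a simplified illustration rather than an actual implementation.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    weights = softmax(scores)
    # The output is a weighted average of the value vectors.
    output = [
        sum(w * value[j] for w, value in zip(weights, values))
        for j in range(len(values[0]))
    ]
    return weights, output

# Hypothetical vectors for three earlier words (made up, not learned).
tokens = ["the", "river", "bank"]
keys = [[0.1, 0.1], [1.0, 0.2], [0.9, 0.3]]
values = [[0.0, 1.0], [1.0, 0.0], [0.5, 0.5]]
query = [1.0, 0.0]

weights, output = attention(query, keys, values)
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.2f}")
```

The weights always sum to 1, and the word whose key best matches the query (here "river") gets the largest share. That is all "paying attention" means in this context: a weighted average, not comprehension.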

Yes, AI Hallucinates!

If you have ever used AI (which, of course, you have, silly me!) and received an answer that sounded right but was completely false, you have seen what is called a hallucination.

This happens for a few reasons:

- The model has no access to real-time facts unless it is connected to a search engine or a database. So, it relies entirely on training data, which might be outdated or incomplete.

- When the AI does not know the correct answer, it does not admit that (haha, trying to be just like us). It simply predicts the most likely response based on past patterns, which often results in convincing but incorrect answers.

- If the training data contains bias, incorrect facts, or assumptions, the model learns and reflects those with equal confidence.
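Here is a small sketch of why a model "answers anyway". The scores below are invented for illustration and do not come from any real model. The point is only that turning scores into probabilities and picking the top one always produces an answer, whether the model is nearly certain or practically guessing.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def answer(scores_by_option):
    """Return the top option and its probability. There is no 'I don't know' branch."""
    options = list(scores_by_option)
    probs = softmax([scores_by_option[o] for o in options])
    best = max(range(len(options)), key=lambda i: probs[i])
    return options[best], probs[best]

# Made-up scores: one case where the pattern is strong in the data...
well_known = {"Paris": 9.0, "Lyon": 2.0, "Berlin": 1.0}
# ...and one where the options are almost equally likely.
obscure = {"1947": 1.1, "1952": 1.0, "1938": 0.9}

for label, scores in [("well known", well_known), ("obscure", obscure)]:
    choice, confidence = answer(scores)
    print(f"{label}: answers '{choice}' with probability {confidence:.2f}")
```

In the second case the top option wins with a probability only slightly above one third, yet the output reads just as fluently as the first. Unless the system around the model checks that probability, or the model has been trained to say it is unsure, the user never sees the difference.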

Making AI More Reliable

Several methods are being adopted to reduce hallucinations and improve accuracy.

Retrieval-Augmented Generation (RAG): This method allows the model to access external sources, like search engines or internal databases, in real time. It retrieves the most relevant documents and then generates a response based on them. This improves the accuracy and reliability of the output. You may already have seen this in action if you have used a GPT-4 model with web search.

High-Quality and Domain-Specific Training: Models trained on high-quality, verified data specific to an industry tend to perform much better. For example, a financial model trained on regulated financial reports will be more accurate in that domain.

Prompt Engineering and Guardrails: Writing clearer, more specific prompts is called prompt engineering. Guardrails go a step further and set boundaries on what the model may answer and in what format. Together, they help limit hallucinations.

Human-in-the-Loop Systems: As for that absurd question, “Will AI replace my job?”, you have to know that even with all the advancements, there is still a need for human oversight. In sectors like medicine, law, and finance, AI should never be left to operate independently. Human review is essential.
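The methods above can be combined. Below is a minimal sketch, under stated assumptions, of how retrieval, a guardrail prompt, and a human-review fallback might fit together. The documents, the word-overlap scoring, the `min_overlap` threshold, and the function names are all hypothetical. A real system would use a proper search index or embeddings, and would then send the prompt to a model, which this sketch deliberately does not do.

```python
import re

# Hypothetical in-memory knowledge base, for illustration only.
DOCUMENTS = [
    "Refunds are processed within 14 days of receiving the returned item.",
    "Support is available Monday to Friday, 9am to 5pm.",
    "Orders over 50 euros ship for free within the EU.",
]

def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=2, min_overlap=2):
    """Crude retrieval: rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in documents]
    relevant = [(score, doc) for score, doc in scored if score >= min_overlap]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]

def build_prompt(question, sources):
    """Guardrail: tell the model to stay within the retrieved sources."""
    context = "\n".join(f"- {source}" for source in sources)
    return (
        "Answer using ONLY the sources below. "
        "If they do not contain the answer, reply 'I don't know'.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )

def prepare(question):
    """Build a grounded prompt, or hand the question to a human if nothing relevant is found."""
    sources = retrieve(question, DOCUMENTS)
    if not sources:
        return {"needs_human_review": True, "prompt": None}
    return {"needs_human_review": False, "prompt": build_prompt(question, sources)}

print(prepare("How many days do refunds take to be processed?"))
print(prepare("What is the CEO's favourite colour?"))
```

The first question finds a matching document, so the model would be asked to answer from that source only. The second finds nothing relevant, so instead of letting the model improvise, the sketch flags it for a person to handle.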

So, AI is definitely NOT THINKING but PREDICTING.

It is a system built on probabilities, patterns, and structured sequences. When used correctly, it can provide powerful insights, improve productivity, and unlock new ways of working. But it is still far from being a reliable source of truth.

As developers, users, and decision-makers, we need to be clear about what AI can and cannot do. The responsibility lies with us to train, refine, and deploy it in a way that adds value without compromising accuracy.

The real question is whether we are guiding AI toward responsible use or simply feeding more data into a system that sounds intelligent but has no real understanding.

I would be interested in hearing your thoughts and experiences with this. Till then, take care of you and yours.
